NEW DELHI— Indian politicians, no strangers to spreading fake news themselves, are worried that Deepfake technology will widen the transmission of misinformation. In Parliament, India’s IT Minister Ravi Shankar Prasad said the IT ministry and the Ministry of Home Affairs are in regular touch with social media platforms to address the issue, under the provisions of the IT Act.

“Social media platforms have implemented initiatives to address the issue of fake news on their platform, such as limiting forwards and promoting fact checking,” Prasad noted. There is also a dedicated website for information security awareness and a Twitter handle called Cyber Dost, both used to spread awareness of cyber safety and cyber security.


Prasad’s own Bharatiya Janata Party, it is worth noting, is one of India’s best-financed propagators of fake news, with its own dedicated troll army and an in-house political “consultancy” called the Association of Billion Minds, which its own party workers insist doesn’t exist.

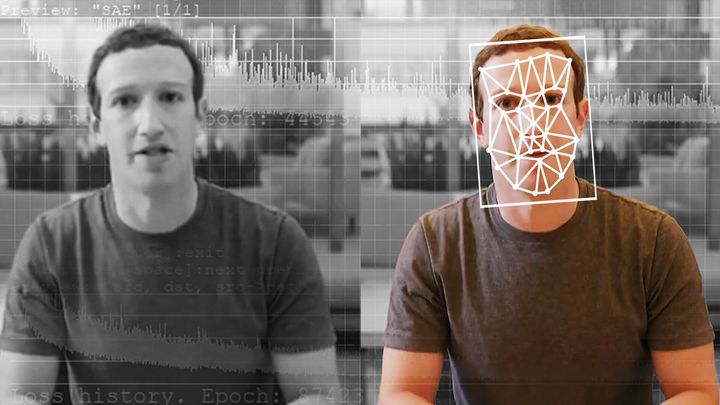

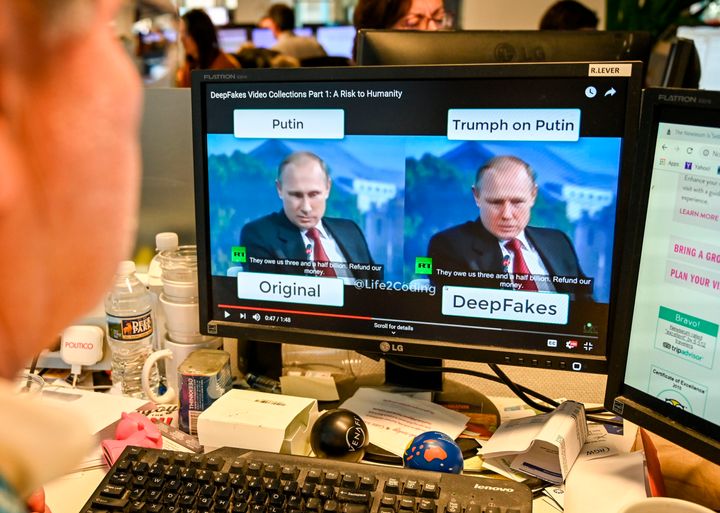

Politicians are worried that deepfakes could be used to spread fake news. Responding to a question in Parliament, Prasad said the government is aware of deepfake technology, which can be used to “create the image or video of a person with any other person’s image or video using artificial neural networks and deep learning/ machine learning techniques.”

Deepfake technology uses open-source machine learning tools like Google’s TensorFlow AI technology, and open-source libraries, along with freely available data like stock photos and YouTube videos, to create life-like videos indistinguishable from the real thing.

One fairly harmless example uses deepfake technology to place Keanu Reeves in the movie Singham.

The technology has been around for years and, in an unforeseen flipside, has actually allowed politicians to evade responsibility for their actions by popularising the notion that even inconvenient real news is actually “fake.”

Meanwhile, the real victims are overwhelmingly women targeted by harassers who use the technology to embed the faces of their victims into porn clips, creating a terrifying new form of deepfake revenge pornography.

The recent flurry of conversation around deepfakes illustrates how technologies that have been used to target women and minorities for years catch public attention only when influential groups like male politicians feel threatened.

Deep panic

Governments across the world have expressed concerns about the misuse of the technology.

In the US, the Pentagon and its research agency, the Defense Advanced Research Projects Agency (DARPA), announced their commitment to researching various ways to combat the new phenomenon. A handful of US states have introduced bills around deepfakes as well.

One prominent bill, introduced by Texas state Senator Bryan Hughes, a Republican, criminalises the act of creating a deepfake video to “injure a candidate or influence the result of an election.”

“Obviously there are going to be some free speech concerns, so for our purposes we wanted to make this pretty narrow and focus on elections,” Hughes said. “Our attempt here is to create a pretty narrow remedy so that if you use this technology with the intent to influence an election then you will be held accountable for it.”

“Deepfakes can be made by anyone with a computer, internet access, and interest in influencing an election,” John Villasenor, a professor at UCLA focusing on artificial intelligence and cybersecurity, told CNBC. He explained that “they are a powerful new tool for those who might want to (use) misinformation to influence an election.” Paul Barrett, adjunct professor of law at New York University, added that “a skillfully made deepfake video could persuade voters that a particular candidate said or did something she didn’t say or do.”

Yet, the majority of victims of deepfakes are women, and misogyny is clearly a driving factor, even in cases with a political intent.

For instance, investigative journalist Rana Ayyub told HuffPost she was targeted by deepfakes after she spoke out in condemnation of the rape of an eight-year-old Kashmiri girl.

“When I first opened it, I was shocked to see my face, but I could tell it wasn’t actually me because, for one, I have curly hair and the woman had straight hair. She also looked really young, not more than 17 or 18,” Ayyub wrote. The police did not register Ayyub’s case, and six months after the video reached her, she gave her statement to a magistrate. Even then, nothing happened, she said. “I get called Jihadi Jane, Isis Sex Slave, ridiculous abuse laced with religious misogyny.”

Deepfake technology first gained notoriety with videos of celebrities, and in India too, there were videos of actresses like Priyanka Chopra, Shraddha Kapoor, and Deepika Padukone. This is partly because the AI needs hundreds of images to “train” and map faces, and such images are most readily available for celebrities.

Before sites like Reddit and Pornhub started to remove deepfakes, Indian users were sharing links of the “Priyanka gif” and the “Katrina vid”, even in the middle of a discussion about its potential impact on politics. Even today, sites exist with videos that have titles like ‘Aishwarya Rai Bollywood POV queen’, and ‘Doctor Cheating Yami Gautam’.

New apps like Zao show how the technology could soon work with just a few images. The app’s creators have restricted its use to adding users’ faces to select clips from films and TV, but others will not show the same restraint.

In the West, it’s being used for more than celebrities, and revenge fake porn is a growing concern. But while deepfake porn continues to upend women’s lives, there still exists no criminal recourse for victims.

While the US Congress and the Indian Parliament worry about how this technology will be used to affect elections and attack politicians, the real threat lies closer to home. Research from cyber-security company Deeptrace found that the vast majority of deepfakes are porn. Its study showed that 96% of deepfake clips feature a computer-generated face replacing that of a porn actor. Experts say that the more widespread this technology becomes, and the more accessible and easy to use, the greater the threat to people who are not high-profile.

Henry Ajder, head of research analysis at Deeptrace, told the BBC: “The debate is all about the politics or fraud and a near-term threat, but a lot of people are forgetting that deepfake pornography is a very real, very current phenomenon that is harming a lot of women.”